Batch Normalization (BatchNorm) Explained Deeply

Want Your Neural Network to Stop Throwing Tantrums? Batch Normalization Might Just Be the Parenting It Needs!

The Problem It Solves: Internal Covariate Shift

When training deep neural networks, one issue that often arises is called internal covariate shift. This refers to the fact that, during training, the distribution of the inputs to each layer keeps changing as the weights of the preceding layers update. These input distributions (i.e., the values each layer receives from the layer before it) can become unstable, which makes convergence harder and training slower.

Why is this a problem?

As layers are trained, the parameters (weights) get updated, causing the activation values of each layer to change. This is especially problematic in deep networks where:

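Later layers adapt to the activations that early layers produce, but those activations change every time the early layers' weights are updated, so later layers are always chasing a moving target.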

The model can struggle to adjust because the activation distributions keep shifting, which can slow learning considerably. Activations that drift into badly scaled ranges can also contribute to vanishing or exploding gradients, which can stall training entirely.

What Does BatchNorm Do?

Batch Normalisation normalises the activations of a layer by re-centring and re-scaling them with statistics computed over each mini-batch, so that they stay in a consistent range throughout training.

Step-by-Step Explanation of BatchNorm:

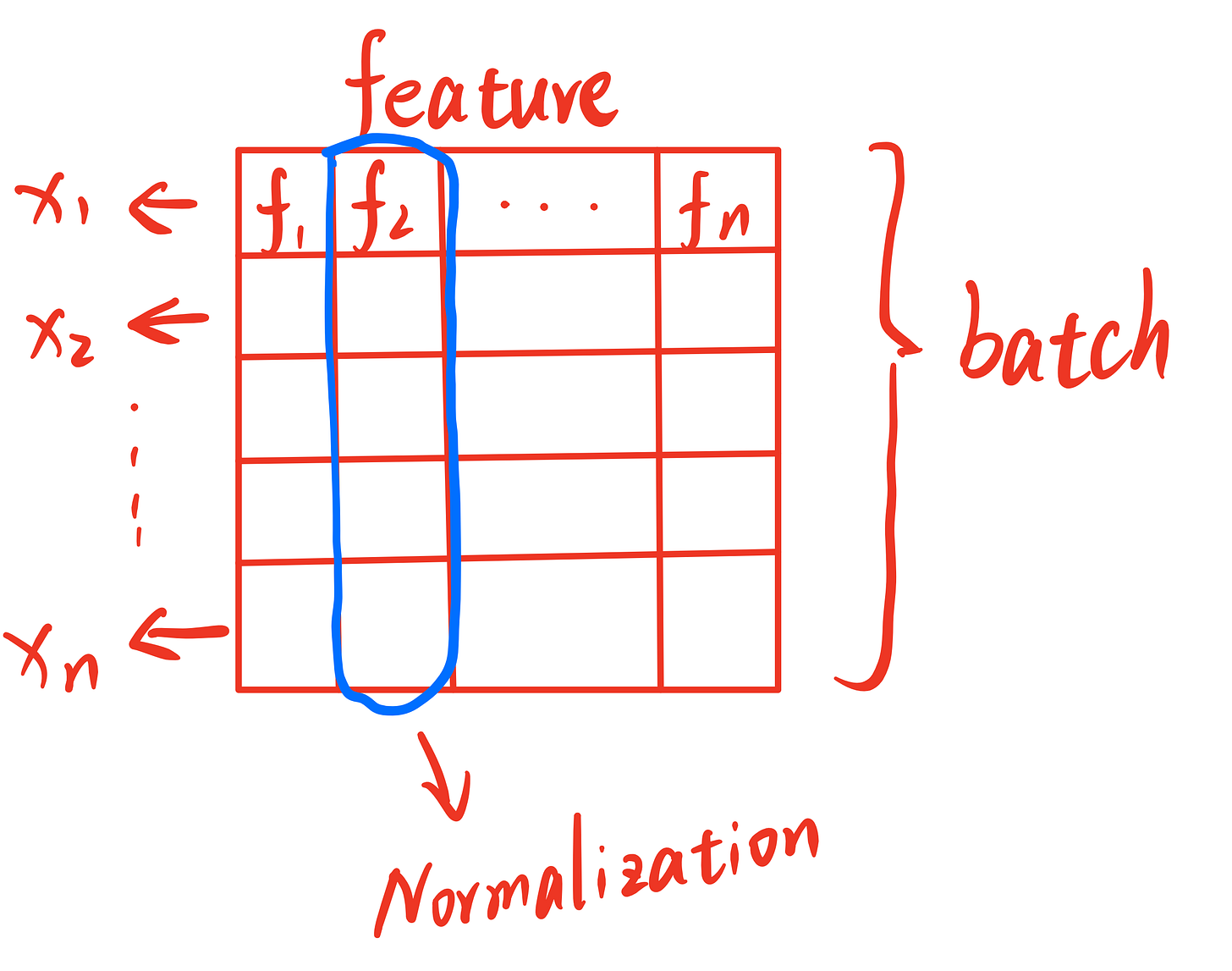

Step 1: Calculate the Mean and Variance of the Mini-Batch

In the training process, data is typically processed in mini-batches: small subsets of the data that are fed into the model together. BatchNorm works by first calculating the mean and variance of the input values (activations) in each mini-batch.

Mean: The mean is the average value of the activations in the mini-batch. It describes their central tendency.

Variance: The variance measures how spread out the activations are from the mean. A higher variance means the activations are spread out more, while a lower variance means they are more tightly packed around the mean.

These values are calculated separately for each feature (or activation) across the entire mini-batch, giving us a sense of how each activation behaves across all examples in the batch.
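A minimal NumPy sketch of Step 1, assuming the mini-batch is stored as a 2-D array of shape (batch_size, num_features) with one example per row. The function name `batch_stats` and the toy data are illustrative, not taken from any particular library.

```python
import numpy as np

def batch_stats(x):
    """Per-feature mean and variance over a mini-batch.

    x: array of shape (batch_size, num_features); each row is one example.
    """
    # Average down the batch axis (axis 0): one mean per feature column.
    mean = x.mean(axis=0)
    # Biased variance (divides by batch_size), the form used to normalise a batch.
    var = x.var(axis=0)
    return mean, var

# A toy mini-batch of 4 examples, each with 2 features.
x = np.array([[1.0, 10.0],
              [2.0, 20.0],
              [3.0, 30.0],
              [4.0, 40.0]])
mean, var = batch_stats(x)
print(mean, var)  # mean is [2.5, 25.0], var is [1.25, 125.0]
```

Each feature gets its own statistics: the second column is ten times the first, so its mean is ten times larger and its variance a hundred times larger.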

Step 2: Normalize the Activations

Once we have the mean and variance, the next step is to normalise the activations. The goal here is to adjust the activations so that they have a mean of 0 and a variance of 1.

To do this:

Subtract the mean from each activation value.

Divide by the standard deviation (the square root of the variance, with a small constant ε added to the variance to avoid division by zero) to adjust the scale.

By performing this normalisation, we ensure that the inputs to the next layer are centred and scaled, which helps the network train more effectively. This makes the network less sensitive to internal covariate shift and can allow it to learn faster.
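Step 2 can be sketched in a few lines of NumPy, again assuming a (batch_size, num_features) array. The value ε = 1e-5 is an assumed but common default (PyTorch's BatchNorm layers use it, for example); the function name `normalize` is hypothetical.

```python
import numpy as np

def normalize(x, eps=1e-5):
    """Normalise each feature of a mini-batch to mean 0 and variance close to 1.

    x: array of shape (batch_size, num_features).
    eps: small constant added to the variance so we never divide by zero.
    """
    mean = x.mean(axis=0)
    var = x.var(axis=0)
    # Subtract the per-feature mean, then divide by the per-feature standard deviation.
    return (x - mean) / np.sqrt(var + eps)

x = np.array([[1.0, 10.0],
              [2.0, 20.0],
              [3.0, 30.0],
              [4.0, 40.0]])
x_hat = normalize(x)
print(x_hat.mean(axis=0))  # approximately [0, 0]
print(x_hat.var(axis=0))   # approximately [1, 1], slightly below 1 because of eps
```

After normalisation the two columns, which started on very different scales, end up on the same scale.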

Step 3: Scale and Shift (Learnable Parameters)

Now, while normalisation helps stabilise the network, we still want to give the model the flexibility to adjust the scale and range of the activations. After normalisation, BatchNorm introduces two learnable parameters:

Scale parameter (γ): This allows the model to scale the normalised output. By learning the optimal scaling factor, the network can adjust how much emphasis each feature should have.

Shift parameter (β): This allows the model to shift the normalised output. By learning the best shift value, the model can move the activations to a more appropriate range for the task at hand.

These parameters (γ and β) are learnt during training, just like the weights of the network, with one pair per feature. They let the model undo the normalisation where that helps: setting γ = √(σ² + ε) and β = μ recovers the original activations exactly.

The final output of the BatchNorm layer is the normalised activations, scaled and shifted: y = γ · x̂ + β, where x̂ is the normalised activation from Step 2.
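Putting Steps 1 to 3 together gives a minimal training-mode forward pass. This is a NumPy sketch, not any framework's implementation; the names `batchnorm_forward`, `gamma` and `beta` are illustrative. It also checks the claim that γ = √(σ² + ε) and β = μ recover the original activations.

```python
import numpy as np

def batchnorm_forward(x, gamma, beta, eps=1e-5):
    """Training-mode BatchNorm forward pass: normalise, then scale and shift.

    x: (batch_size, num_features); gamma, beta: (num_features,) learnable parameters.
    """
    mean = x.mean(axis=0)                     # Step 1: per-feature statistics
    var = x.var(axis=0)
    x_hat = (x - mean) / np.sqrt(var + eps)   # Step 2: normalise
    return gamma * x_hat + beta               # Step 3: y = gamma * x_hat + beta

rng = np.random.default_rng(0)
x = rng.normal(loc=5.0, scale=3.0, size=(64, 3))

# A common initialisation: gamma = 1, beta = 0, so the layer starts as plain normalisation.
y = batchnorm_forward(x, gamma=np.ones(3), beta=np.zeros(3))

# Choosing gamma = sqrt(var + eps) and beta = mean undoes the normalisation,
# so the network can recover the original activations if that works best.
eps = 1e-5
gamma = np.sqrt(x.var(axis=0) + eps)
beta = x.mean(axis=0)
y_identity = batchnorm_forward(x, gamma, beta, eps)
print(np.allclose(y_identity, x))  # True
```

In a real network γ and β would be updated by gradient descent rather than set by hand; the hand-picked values here only demonstrate what the parameters are able to express.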

Benefits of BatchNorm

1. Stabilizes and Speeds Up Training

Since each layer’s activations are normalised, training becomes more stable and faster.

Because activation values are kept from growing too large or too small, the network can often tolerate a higher learning rate, which speeds up convergence.
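One reason for this tolerance, noted in the original BatchNorm paper, is scale invariance: multiplying the weights that feed a BatchNorm layer by a positive constant leaves its output essentially unchanged, so a large update to the weight scale does not blow up the activations. The NumPy sketch below checks this numerically for a hypothetical dense layer (random weights `W`, no bias, γ = 1 and β = 0); it is an illustration, not a training experiment.

```python
import numpy as np

def batchnorm(z, eps=1e-5):
    # Normalise each feature over the batch (gamma = 1, beta = 0 for simplicity).
    return (z - z.mean(axis=0)) / np.sqrt(z.var(axis=0) + eps)

rng = np.random.default_rng(42)
inputs = rng.normal(size=(32, 8))   # mini-batch of 32 examples, 8 input features
W = rng.normal(size=(8, 4))         # weights of a hypothetical dense layer

out_small = batchnorm(inputs @ W)
out_large = batchnorm(inputs @ (10.0 * W))   # the same weights scaled up 10x

# The scale of W cancels out, so the normalised activations are almost identical;
# the tiny remaining difference comes only from eps.
print(np.allclose(out_small, out_large, atol=1e-4))  # True
```

Without BatchNorm, the same 10x change in `W` would make every pre-activation 10 times larger.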

2. Improves Convergence

By reducing the internal covariate shift, each layer gets inputs that are more stable and easier to learn from.

Deep networks (with many layers) benefit the most, because they are the most prone to internal covariate shift.

3. Acts as Regularization

BatchNorm can reduce overfitting, especially when there’s limited data.

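In some cases it can act similarly to dropout: because each example is normalised with the statistics of whichever other examples share its mini-batch, its activations pick up a small amount of noise, which discourages the model from overfitting to the training data.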
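The source of this noise can be seen directly: in the NumPy sketch below (the example values and the random "companion" examples are made up for illustration), the same training example is normalised inside three different mini-batches and comes out slightly differently each time.

```python
import numpy as np

def batchnorm(x, eps=1e-5):
    # Training-mode normalisation using the statistics of this mini-batch only.
    return (x - x.mean(axis=0)) / np.sqrt(x.var(axis=0) + eps)

example = np.array([[2.0, -1.0]])   # one fixed training example with 2 features

rng = np.random.default_rng(7)
outputs = []
for trial in range(3):
    # Put the same example in a mini-batch with 7 different random companions.
    companions = rng.normal(size=(7, 2))
    batch = np.vstack([example, companions])
    out = batchnorm(batch)[0]   # normalised output for our fixed example
    outputs.append(out)
    print(f"batch {trial}: {out}")
```

The input never changes, yet its normalised value does, because the batch mean and variance depend on the other examples drawn alongside it.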

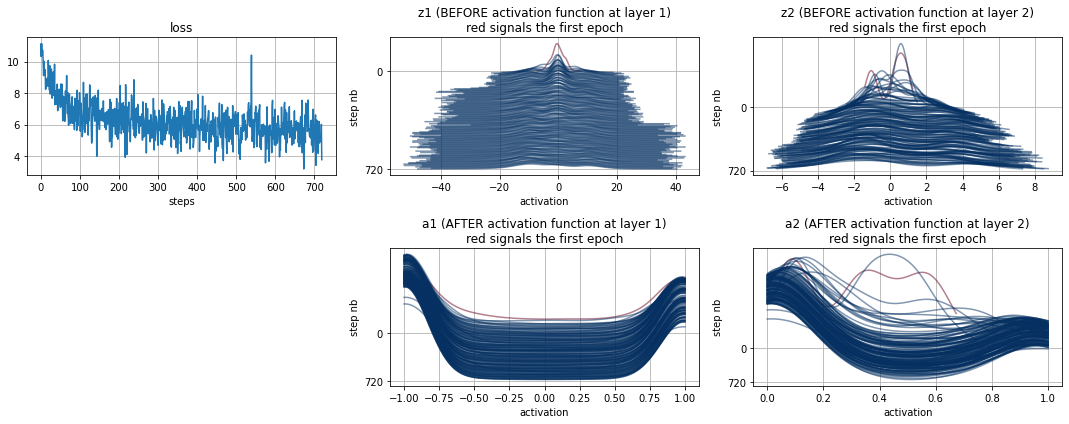

Image source: Fagonzalez 2021 - Batch Normalization Analysis. This article provides an in-depth analysis of batch normalization, which you can explore further for a more comprehensive understanding.

How and Where is BatchNorm Used?

After Convolution or Fully Connected Layers:

BatchNorm is commonly applied after convolutional or fully connected layers and before the activation function (like ReLU), as in the original formulation; some architectures place it after the activation instead.

This helps maintain stable activations before non-linearities are applied.
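For convolutional layers, the "features" in Step 1 are the channels: each channel gets one mean and one variance, computed over the batch and over every spatial position, so a single γ and β are shared across the whole feature map. The NumPy sketch below assumes a (batch, channels, height, width) layout and uses random numbers as a stand-in for a real convolution's output; `batchnorm2d` and `relu` are illustrative names.

```python
import numpy as np

def batchnorm2d(x, gamma, beta, eps=1e-5):
    """BatchNorm for conv feature maps of shape (batch, channels, height, width).

    Statistics are computed per channel, over the batch and both spatial axes.
    """
    mean = x.mean(axis=(0, 2, 3), keepdims=True)   # shape (1, C, 1, 1)
    var = x.var(axis=(0, 2, 3), keepdims=True)
    x_hat = (x - mean) / np.sqrt(var + eps)
    # Reshape gamma/beta from (C,) so they broadcast over N, H and W.
    return gamma.reshape(1, -1, 1, 1) * x_hat + beta.reshape(1, -1, 1, 1)

def relu(x):
    return np.maximum(x, 0.0)

rng = np.random.default_rng(0)
# Stand-in for a conv layer's output: 8 images, 16 channels, 5x5 feature maps.
conv_out = rng.normal(loc=3.0, scale=2.0, size=(8, 16, 5, 5))

C = conv_out.shape[1]
bn_out = batchnorm2d(conv_out, gamma=np.ones(C), beta=np.zeros(C))
activated = relu(bn_out)   # the non-linearity is applied after BatchNorm
print(bn_out.shape, activated.min())
```

Because BatchNorm re-centres each channel around zero, the ReLU that follows sees inputs on both sides of its threshold instead of activations that have drifted far into the positive range.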

During Training and Testing:

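During training, BatchNorm normalises with the statistics (mean and variance) of each mini-batch.

During testing, it instead uses running averages of the mean and variance accumulated during training, which estimate the statistics of the whole training set. This keeps each prediction deterministic and independent of whatever else happens to be in the batch.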
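The switch between training and test behaviour can be sketched as a small NumPy class. This is a simplified illustration, not any framework's code: the class name `BatchNorm1D`, the momentum of 0.1 and the exponential-moving-average update are assumptions, and real frameworks define momentum differently (for example, PyTorch and Keras use opposite conventions).

```python
import numpy as np

class BatchNorm1D:
    """Minimal BatchNorm with running statistics for inference (illustrative only).

    momentum: weight given to the newest batch when updating the running averages.
    """

    def __init__(self, num_features, momentum=0.1, eps=1e-5):
        self.gamma = np.ones(num_features)
        self.beta = np.zeros(num_features)
        self.running_mean = np.zeros(num_features)
        self.running_var = np.ones(num_features)
        self.momentum = momentum
        self.eps = eps

    def __call__(self, x, training):
        if training:
            # Training: normalise with this mini-batch's own statistics...
            mean, var = x.mean(axis=0), x.var(axis=0)
            # ...and fold them into exponential moving averages for later use.
            m = self.momentum
            self.running_mean = (1 - m) * self.running_mean + m * mean
            self.running_var = (1 - m) * self.running_var + m * var
        else:
            # Testing: use the stored estimates, so the output ignores the rest of the batch.
            mean, var = self.running_mean, self.running_var
        x_hat = (x - mean) / np.sqrt(var + self.eps)
        return self.gamma * x_hat + self.beta

rng = np.random.default_rng(0)
bn = BatchNorm1D(num_features=2)
for _ in range(500):  # simulate training on mini-batches with mean 4 and std 2
    bn(rng.normal(loc=4.0, scale=2.0, size=(32, 2)), training=True)

print(bn.running_mean, bn.running_var)  # both close to 4 for each feature

# At test time, one example gets the same output alone or inside a larger batch.
single = np.array([[6.0, 2.0]])
alone = bn(single, training=False)
with_others = bn(np.vstack([single, rng.normal(size=(5, 2))]), training=False)[:1]
print(alone, np.allclose(alone, with_others))  # alone is close to [[1, -1]]; then True
```

Compare this with the training-mode behaviour, where the same example's output changes with its batch companions.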

Visualising BatchNorm:

Let’s visualise a typical neural network flow with BatchNorm:

Input Image → Conv Layer → BatchNorm → Activation (ReLU) → Dropout → Fully Connected → Output

Real-world Impact:

In industry, BatchNorm is widely used, especially in convolutional networks for computer vision.

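ResNet and Google’s Inception (from v2 onward) use BatchNorm extensively. The original VGG networks predate BatchNorm and were trained without it, although BatchNorm variants of VGG are now common.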

It has been key to training very deep networks, such as ResNets with over a hundred layers, efficiently and effectively.

💡 Quick Final Thought:

BatchNorm is the unsung hero of deep learning because it quietly does a lot of heavy lifting behind the scenes, keeping training smooth and fast while reducing (though not eliminating) the need to micromanage the learning rate or initialisation.